FRIDAY - Transforming Black-box Models into Transparent Assets

Client.

Standard Chartered Bank

Tools.

Figma

Year.

2023

Role.

Sr. Product Designer

Background

Standard Chartered Bank builds machine learning models that process critical financial documents such as passports, cheques, and loan applications. These models are central to banking operations and directly impact customers, making responsible AI both a regulatory and business requirement.

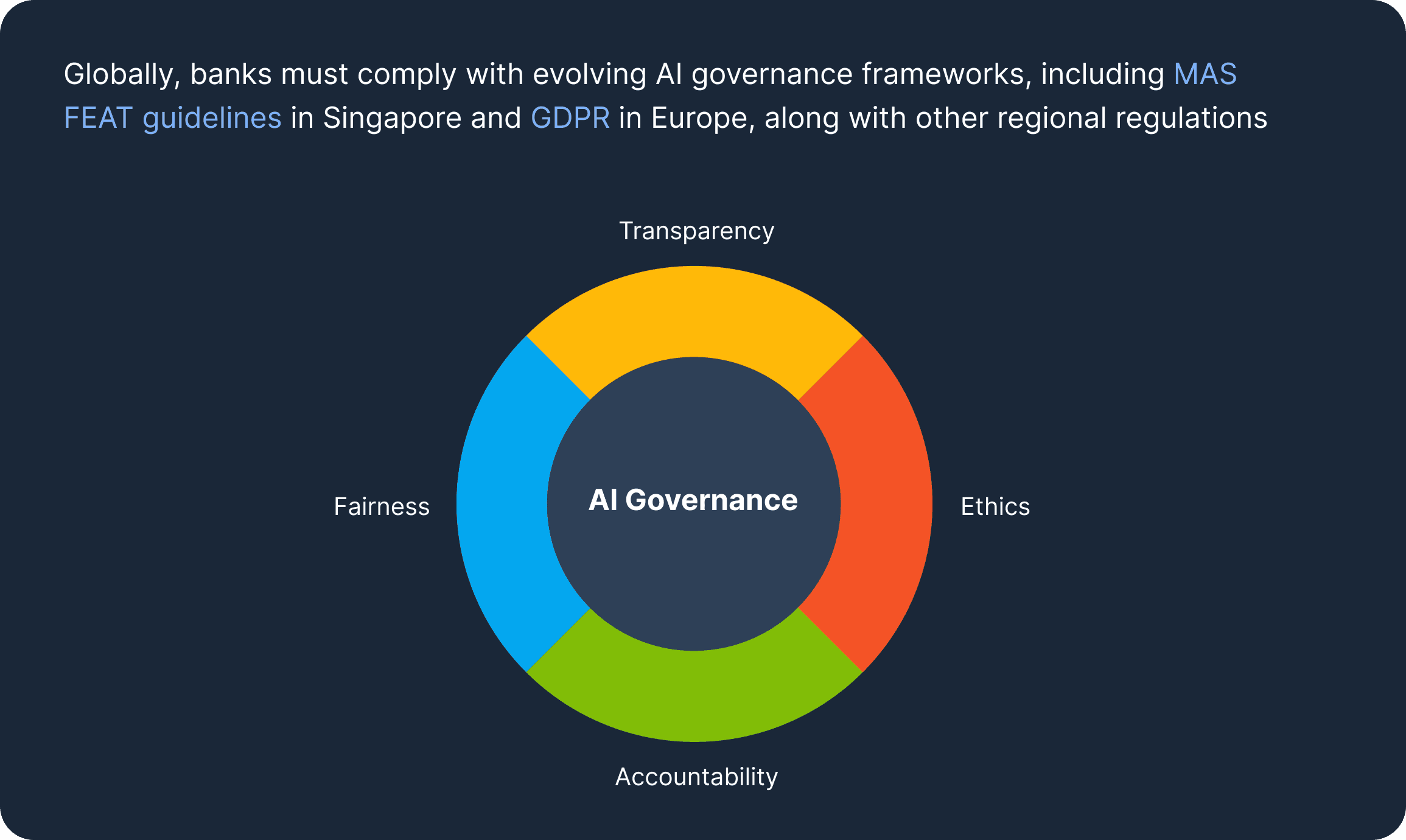

Globally, banks must comply with evolving AI governance and data protection frameworks, including the MAS FEAT (Fairness, Ethics, Accountability and Transparency) principles in Singapore and the GDPR in Europe, along with other regional regulations. These standards require models to be fair, explainable, accountable, and privacy-compliant, with full auditability across data and decisions.

To meet these requirements, every model must go through rigorous validation, bias checks, documentation, and monitoring. However, this process was traditionally manual and fragmented across teams and tools, making compliance slow and difficult to scale.

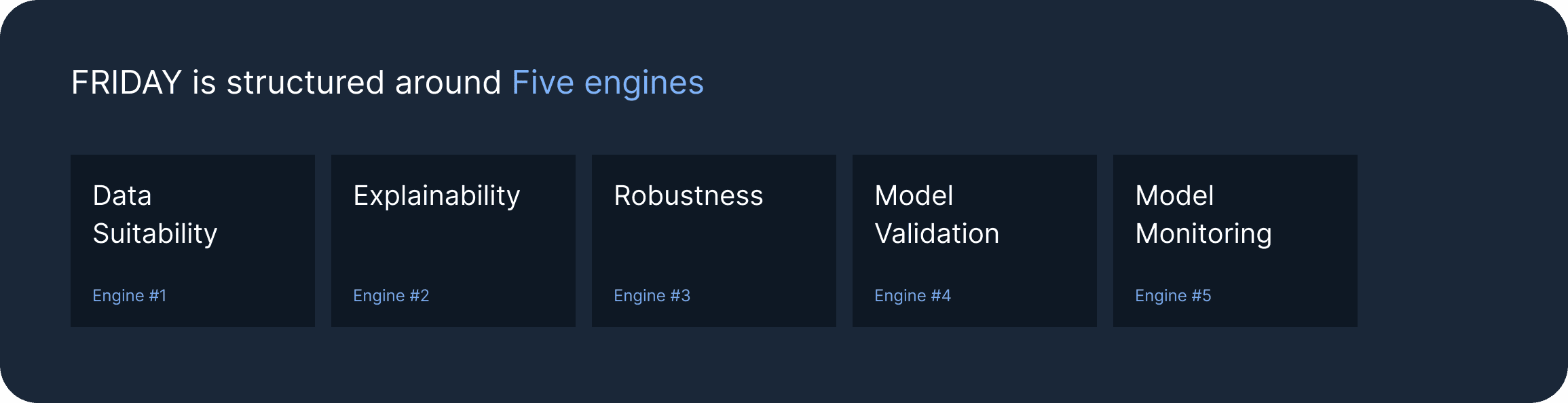

To solve this, Standard Chartered built FRIDAY (Framework Responsible for Intelligent Data and Algorithm Yield), a centralized governance platform that guides a model from raw training data to a validated, compliant, production-ready asset. It transforms black-box models into transparent, bank-grade systems designed for regulatory scrutiny.

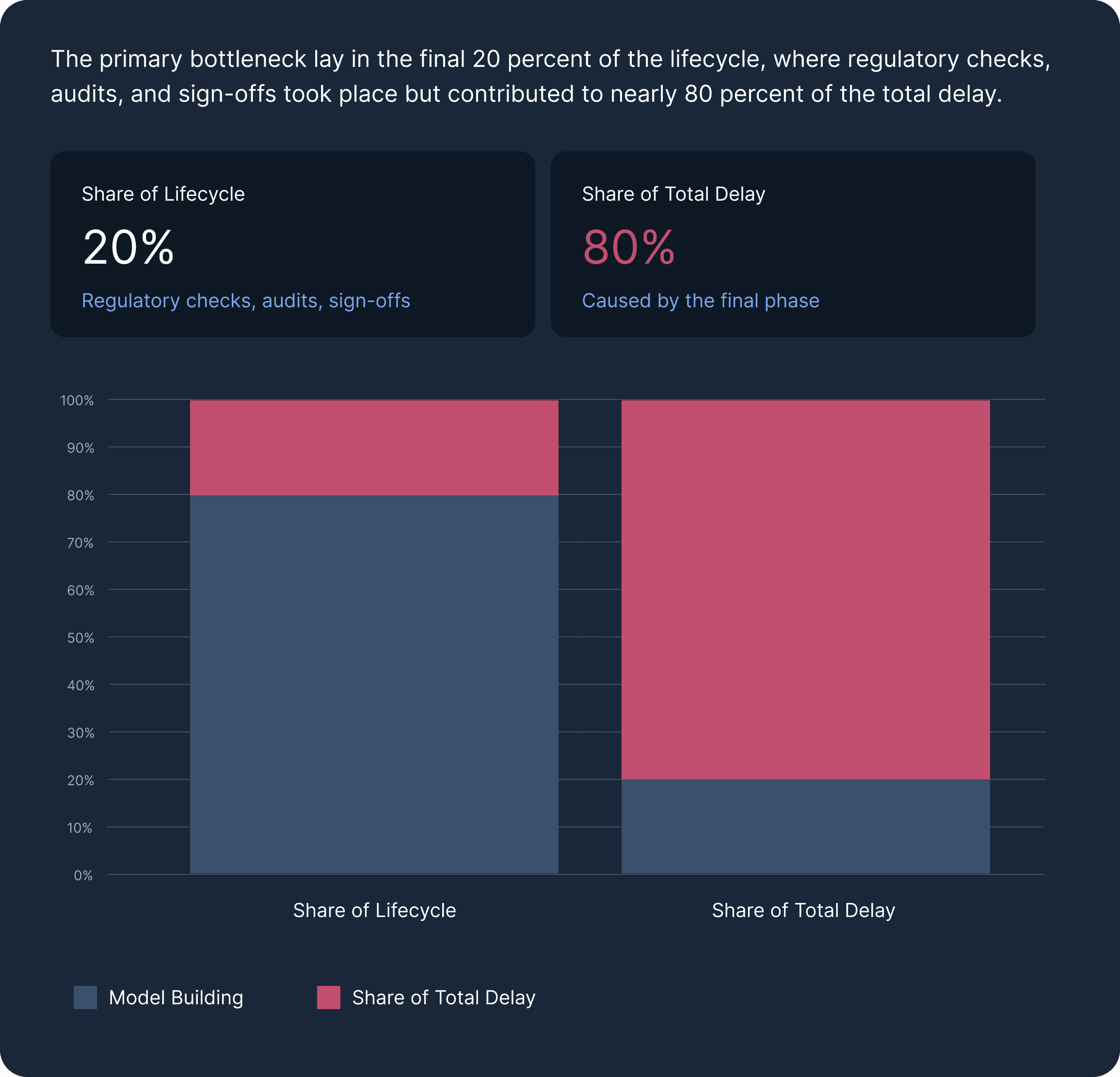

Before FRIDAY, deploying a model typically took 6 to 9 months. The main bottleneck was the final 20 percent of the lifecycle (regulatory checks, audits, and sign-offs), which accounted for nearly 80 percent of the overall delay.

FRIDAY was introduced to automate this stage, with the goal of reducing deployment time to under 30 days while ensuring full compliance with FEAT and GDPR standards.

My Role

I worked as the Lead UX Designer on FRIDAY, contributing across design strategy, stakeholder alignment, and execution. I was responsible for the platform’s design system and visual language, and led the end-to-end design of the Explainability and Data Suitability engines, while two other designers worked on the remaining engines.

My work spanned the full design process, from stakeholder interviews and workflow mapping to design system creation and UI design. I also developed a service blueprint to map the model lifecycle and uncover key bottlenecks. This case study focuses on the Data Suitability Engine, the first and most foundational engine within FRIDAY.

Problem

Before any model is trained, the data must be verified. The quality and representativeness of training data directly determine model accuracy, fairness, and its ability to meet regulatory standards.

To design the Data Suitability Engine, I first needed to understand how teams were managing this process without a unified platform.

I conducted stakeholder interviews with data scientists, project managers, and model validation teams, and shadowed them as they sourced, cleaned, and documented datasets. I also created a service blueprint mapping the journey from raw data to a model-ready dataset.

The research focused on:

Data sources and access processes

Methods used for quality checks

How bias and representation were validated

PII masking practices and consistency

Time required for end-to-end data preparation

Key Findings

1. Data sourcing was fragmented and time-consuming

Training data came from multiple internal systems, archives, and databases, each with different formats and access controls. Consolidating this required manual effort and coordination, often delaying the start of actual work.

2. Quality validation lacked standardization

Teams performed thorough checks using scripts and manual reviews, but there was no shared standard. As data volume grew, maintaining consistency became difficult.

3. Bias checks were detailed but reactive

Teams verified representation across geography, demographics, and document types, but these checks often happened late. Identifying gaps at that stage led to significant delays.

4. PII masking was meticulous and repetitive

Sensitive customer data was masked manually for every dataset. With stricter regulations, teams also had to document exactly what was masked and how, increasing effort.

5. Compliance documentation was separate from the workflow

Documentation describing data preparation and handling was created after the work was done, adding another layer of manual effort.

6. Data preparation took 4 to 6 weeks per model

Across sourcing, validation, bias checks, masking, and documentation, data preparation alone consumed several weeks before model training could begin.

Teams were already doing the right things, but the process was entirely manual. As regulatory demands increased, this approach would not scale. The opportunity was to automate data suitability workflows, enabling the same level of rigor in significantly less time.

Solution

Working alongside system architects and engineers who handled the underlying automation logic, my role was to design the interface and experience of the Data Suitability Engine, making a complex, multi-step compliance process feel guided, structured, and far less time-intensive for data scientists.

A key design decision was made early: data scientists never upload datasets directly into FRIDAY. Data flows in from the ADP (Automated Data Pipeline) tool, which handles data preparation upstream. FRIDAY picks it up from there and takes it through governance. This means unvetted data can never bypass the pipeline. Governance is enforced at the source, not just at the check.

The engine is structured as a five-step flow: confirming the data source, running quality checks, auditing for bias and representation, masking PII, and generating the suitability report automatically.

Step 1 — Data source visibility and lineage

The first screen confirms what data has been pulled from ADP and gives data scientists a complete picture of their training set, covering sources, record counts, regions, date range, and document composition, before any checks begin. Everything is logged automatically: source system, extraction date, version, and access owner. There is no manual inventory to maintain.

This replaced a fragmented, person-dependent system where data lineage existed only in individual memory. If a compliance question arose post-deployment, teams could now answer it in seconds.

Time saved: Eliminates manual data inventory and the coordination overhead of tracking sources across disconnected systems.
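
To make the lineage log in Step 1 concrete, here is a minimal sketch of what one logged entry and the summary shown on the first screen might look like. The `LineageRecord` class, the `summarize_lineage` function, and all field values are hypothetical and chosen for illustration; they are not FRIDAY's or ADP's actual data model.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LineageRecord:
    """One automatically logged entry per source pulled from upstream (hypothetical shape)."""
    source_system: str
    extraction_date: date
    version: str
    access_owner: str
    record_count: int


def summarize_lineage(records):
    """Build the overview a data scientist would see before any checks begin."""
    return {
        "sources": sorted({r.source_system for r in records}),
        "total_records": sum(r.record_count for r in records),
        "earliest_extraction": min(r.extraction_date for r in records),
        "latest_extraction": max(r.extraction_date for r in records),
    }


# Illustrative values only.
records = [
    LineageRecord("loan_archive", date(2023, 1, 10), "v3", "owner-a", 12000),
    LineageRecord("kyc_store", date(2023, 2, 2), "v1", "owner-b", 8000),
]
summary = summarize_lineage(records)
```

Because each record is immutable and captured at extraction time, a question such as "which source and version fed this model?" becomes a lookup rather than a conversation.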

Step 2 — Standardised automated quality checks

The engine runs a consistent, bank-wide set of quality checks across every dataset, covering missing values, duplicates, outliers, class imbalance, format consistency, label completeness, and image resolution, and surfaces results as visual, actionable flags. The same checks run for every model, every time, with results logged automatically.

Data scientists no longer write their own validation scripts. They see exactly what passed, what needs attention, and what must be resolved before proceeding, with no ambiguity.

Time saved: Replaces ad hoc, per-model validation scripts with automated checks that run in minutes rather than days.
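
The idea of one shared set of checks that returns pass, attention, or blocker flags can be sketched as follows. This is an illustrative example covering only three of the checks named in Step 2 (missing values, duplicates, class imbalance); the function name, field names, statuses, and the 0.8 imbalance threshold are assumptions, not the engine's real rules.

```python
from collections import Counter


def run_quality_checks(rows, required=("doc_id", "label"), max_majority_share=0.8):
    """Run the same checks on every dataset and return one flag per check."""
    flags = []

    # Missing values: any required field that is absent, None, or empty.
    missing = sum(1 for r in rows for f in required if r.get(f) in (None, ""))
    flags.append({"check": "missing_values", "count": missing,
                  "status": "pass" if missing == 0 else "blocker"})

    # Duplicates: extra rows that reuse an existing doc_id.
    ids = Counter(r["doc_id"] for r in rows if r.get("doc_id"))
    duplicates = sum(c - 1 for c in ids.values() if c > 1)
    flags.append({"check": "duplicates", "count": duplicates,
                  "status": "pass" if duplicates == 0 else "attention"})

    # Class imbalance: share of the most common label among labelled rows.
    labels = Counter(r["label"] for r in rows if r.get("label"))
    majority = max(labels.values()) / sum(labels.values()) if labels else 0.0
    flags.append({"check": "class_imbalance", "majority_share": round(majority, 3),
                  "status": "pass" if majority <= max_majority_share else "attention"})
    return flags


# Illustrative rows only.
rows = [
    {"doc_id": "a1", "label": "passport"},
    {"doc_id": "a2", "label": "passport"},
    {"doc_id": "a2", "label": "cheque"},
    {"doc_id": "a3", "label": ""},
]
flags = run_quality_checks(rows)
```

Returning structured flags rather than printing results is what lets an interface render them as visual states and log them without extra work from the data scientist.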

Step 3 — Bias and representation audit

A dedicated layer checks whether the dataset is representative across the dimensions that matter most for FEAT compliance, including geography, document type, customer segment, and time period. Gaps are surfaced visually and given a risk rating, so teams can see exactly where the data is thin and make an informed decision before training begins, not weeks into development.

FEAT fairness checks are embedded directly into this step, so the model's regulatory alignment is verified at the data level, not just at the output level.

Time saved: Moves bias detection to the very start of the process, preventing costly rework from gaps discovered late.
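
A representation audit of the kind described in Step 3 can be approximated by computing each expected group's share of the dataset and mapping it to a risk rating. The sketch below is a simplified illustration: the `audit_representation` function, the region codes, and the 5 percent and 15 percent thresholds are assumptions, and real FEAT fairness assessments involve more than share counts.

```python
from collections import Counter


def audit_representation(rows, dimension, expected_values, high_below=0.05, medium_below=0.15):
    """Rate how thinly each expected value of a dimension is represented."""
    counts = Counter(r.get(dimension) for r in rows)
    total = len(rows)
    result = {}
    for value in expected_values:
        share = counts.get(value, 0) / total if total else 0.0
        if share < high_below:
            risk = "high"      # almost no data for this group
        elif share < medium_below:
            risk = "medium"    # present, but thin
        else:
            risk = "low"
        result[value] = {"share": round(share, 3), "risk": risk}
    return result


# Illustrative dataset: 40 documents across three regions, one expected region absent.
rows = [{"region": "SG"}] * 35 + [{"region": "IN"}] * 4 + [{"region": "KE"}] * 1
audit = audit_representation(rows, "region", ["SG", "IN", "KE", "AE"])
```

Passing the list of expected values explicitly matters: a group with zero records would otherwise never appear in the counts, and the gap would stay invisible.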

Step 4 — Guided PII masking with enforcement

The masking screen presents a regulation-mapped checklist of every field category requiring protection, distinguishing clearly between GDPR privacy fields, FEAT fairness fields, and internal policy fields. All fields are masked by default. Each field shows the masking technique applied (tokenisation, redaction, generalisation, full exclusion) and the regulation that mandates it.

Every masking decision is logged automatically, giving teams the regulatory traceability they need without having to document it separately. The distinction between masked for privacy and excluded for fairness is made explicit in the interface, resolving an ambiguity that had previously been left to individual judgment.

Time saved: Replaces field-by-field manual masking and retrospective documentation with a guided, enforced, logged workflow.
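
The combination of a regulation-mapped policy and an automatic decision log in Step 4 can be illustrated with a short sketch. Everything here is hypothetical: the field names, the field-to-regulation mapping in `POLICY`, and the salted hash used as a stand-in for tokenisation (a production system would more likely use a token vault) are assumptions made for the example.

```python
import hashlib

# Hypothetical policy: field -> (masking technique, regulation that mandates it).
POLICY = {
    "passport_number": ("tokenisation", "GDPR"),
    "full_name": ("redaction", "GDPR"),
    "date_of_birth": ("generalisation", "GDPR"),
    "nationality": ("full_exclusion", "FEAT"),
}


def mask_record(record, policy=POLICY, salt="demo-salt"):
    """Apply the policy to one record and log every masking decision."""
    masked, log = {}, []
    for field, value in record.items():
        if field not in policy:
            masked[field] = value  # not a protected field category in this sketch
            continue
        technique, regulation = policy[field]
        if technique == "tokenisation":
            # Stand-in token: truncated salted SHA-256 digest.
            masked[field] = hashlib.sha256((salt + str(value)).encode()).hexdigest()[:12]
        elif technique == "redaction":
            masked[field] = "[REDACTED]"
        elif technique == "generalisation":
            masked[field] = str(value)[:4]  # keep the year, assuming an ISO date string
        elif technique == "full_exclusion":
            pass  # field is dropped from the output entirely
        else:
            raise ValueError(f"unknown technique: {technique}")
        log.append({"field": field, "technique": technique, "regulation": regulation})
    return masked, log


# Illustrative record only.
record = {
    "passport_number": "E1234567",
    "full_name": "Jane Doe",
    "date_of_birth": "1990-04-12",
    "nationality": "SG",
    "doc_type": "passport",
}
masked, log = mask_record(record)
```

Keeping the regulation next to the technique in the policy is what makes the "masked for privacy" versus "excluded for fairness" distinction explicit in both the output and the log.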

Step 5 — Auto-generated suitability report

At the end of the flow, every check, flag, score, and masking decision is compiled automatically into a Suitability Report, the dataset's regulatory record. It includes an overall suitability score broken down by dimension, a full flags summary, and a complete dataset metadata log. The report is generated the moment the final step is completed.

This report becomes the first section of the model's overall Validation Report, the legal record that the Validation team needs before any model goes live. It is produced without a single email, without a single retrospective write-up, and in minutes rather than weeks.

Time saved: Turns compliance documentation from a separate, time-consuming task into a natural, automatic output of the process itself.
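
As a rough illustration of how the outputs of the earlier steps could be compiled into a single report in Step 5, consider the sketch below. The `build_suitability_report` function, the report keys, and the use of a simple mean for the overall score are assumptions; the source does not describe how FRIDAY computes its suitability score.

```python
import json
from collections import Counter
from statistics import mean


def build_suitability_report(metadata, dimension_scores, flags, masking_log):
    """Compile checks, flags, scores, and masking decisions into one record."""
    return {
        "metadata": metadata,
        # Assumed scoring rule: unweighted mean of the per-dimension scores.
        "overall_score": round(mean(dimension_scores.values()), 1),
        "dimension_scores": dimension_scores,
        "flags_summary": dict(Counter(f["status"] for f in flags)),
        "flags": flags,
        "masking_decisions": masking_log,
    }


# Illustrative inputs only.
report = build_suitability_report(
    metadata={"dataset": "demo-set", "version": "v1"},
    dimension_scores={"quality": 90, "representation": 70, "privacy": 100},
    flags=[
        {"check": "missing_values", "status": "pass"},
        {"check": "duplicates", "status": "attention"},
        {"check": "class_imbalance", "status": "pass"},
    ],
    masking_log=[{"field": "full_name", "technique": "redaction", "regulation": "GDPR"}],
)
report_json = json.dumps(report, indent=2)
```

Since the report is assembled purely from data the earlier steps already logged, it can be generated the moment the final step completes, with no retrospective write-up.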

Impact

This redesign went beyond cleanup. It rebuilt confidence in how licensing updates were received and acted upon.

Measurable Impact:

42% reduction in notification overload through role- and scenario-based logic

58% faster response times to critical actions thanks to prioritization and inline actions

70% drop in email volume by removing redundant and low-priority updates